Cerebras Systems is the most compelling pure-play AI infrastructure company approaching public markets. In six months, the company has transformed from a single-customer chip startup into a multi-hyperscaler platform with $10B+ in contracted revenue, validated by OpenAI, Amazon/AWS, Meta, Oracle, the U.S. Department of Energy, and the Government of India. We initiate coverage with an OUTPERFORM rating and a 2-year market cap target of $65B, with significant upside to $140B on a 5-year horizon.

| SNAPSHOT | |
| --- | --- |
| Current valuation | $23B (Series H, Feb 2026; Tiger Global led, AMD participated) |
| Total capital raised | ~$4B+ across 8 rounds |
| IPO target | Q2–Q3 2026 (Nasdaq: CBRS) |
| FY2024E revenue | ~$500M (535% YoY growth per PM Insights) |
| FY2026E revenue (est.) | $3.9B |
| Contracted backlog | $10B+ (OpenAI through 2028) |
| Key customers | OpenAI, Amazon/AWS, Meta, Oracle, IBM, Mistral, Perplexity, DOE, India |
| Inference capacity | 8+ datacenters, 40+ exaflops, 85% U.S.-based |
| Core technology | WSE-3: 4T transistors, 900K cores, 20x faster than GPUs |

40 days that changed everything

Between January and March 2026, Cerebras announced six transformative developments that collectively rewrote the investment thesis:

| Date | Catalyst |
| --- | --- |
| Jan 14 | OpenAI: $10B+ deal for 750MW of inference compute through 2028, the largest single AI hardware commitment to a non-Nvidia company |
| Feb 4 | Series H: Raised $1B at $23B valuation (184% increase in 5 months). Tiger Global led; AMD participated as strategic investor |
| Feb 12 | OpenAI Codex-Spark: First production AI model on non-Nvidia hardware. GPT-5.3-Codex-Spark runs at 1,000+ tok/s on WSE-3. 1M+ weekly active Codex users |
| Feb 20 | India: 8 exaflop national AI supercomputer with G42/MBZUAI/C-DAC under the India AI Mission. One of the largest AI infra investments in Asia |
| Mar 10 | Oracle: CEO Clay Magouyrk named Cerebras alongside Nvidia and AMD on Q3 FY26 earnings call. Oracle RPO quadrupled to $553B |
| Mar 13 | Amazon/AWS: Disaggregated inference (Trainium prefill + CS-3 decode) deployed on Amazon Bedrock. Exclusive to Bedrock. Amazon Nova models on Cerebras. AWS is first hyperscaler to commit |

The Amazon/AWS deal is particularly significant: AWS VP David Brown stated the result will be “inference that’s an order of magnitude faster and higher performance than what’s available today.” The solution is built on the AWS Nitro System, with Amazon Nova models running on Cerebras hardware, and Cerebras’ disaggregated inference architecture is exclusive to Amazon Bedrock. This embeds Cerebras into the distribution fabric of the world’s largest cloud platform.

Investment thesis: the five pillars

1. The inference TAM is exploding — and Cerebras owns the speed crown

Deloitte estimates inference will account for ~66% of all AI computation in 2026, up from ~50% in 2025. McKinsey projects 80% by 2027. The inference market is projected to grow from $106B (2025) to $255B by 2030. Cerebras delivers 2,600+ tokens/second on Llama 4 Scout versus ~130 for ChatGPT — a 20x speed advantage that is architecturally fundamental. This speed enables new categories: real-time voice agents, sub-second reasoning, interactive code generation, and agentic workflows.
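As a rough cross-check of the market figures quoted above, the growth rate implied by the $106B (2025) and $255B (2030) endpoints, and the throughput ratio behind the "20x" claim, can be computed directly. This is a minimal sketch: the helper name `cagr` is illustrative, and the inputs are only the numbers cited in this section.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1 / years) - 1


# Inference market size in $B, as quoted in the text.
tam_2025_bn = 106.0
tam_2030_bn = 255.0
inference_tam_cagr = cagr(tam_2025_bn, tam_2030_bn, years=2030 - 2025)

# Tokens per second, as quoted in the text.
cerebras_tok_s = 2600
comparison_tok_s = 130
speed_ratio = cerebras_tok_s / comparison_tok_s

print(f"Implied inference TAM CAGR, 2025-2030: {inference_tam_cagr:.1%}")
print(f"Throughput ratio: {speed_ratio:.0f}x")
```

On these inputs the market projection works out to a growth rate of roughly 19% per year, which is far slower than the revenue growth modeled for Cerebras itself, so the thesis depends on share gains rather than market growth alone.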

2. OpenAI deal transforms the business — and is already in production

The $10B+ commitment through 2028 is now producing live product. GPT-5.3-Codex-Spark, launched February 12, is OpenAI’s first-ever production deployment on non-Nvidia hardware. Running at 1,000+ tok/s, it reduced per-client round-trip overhead by 80% and time-to-first-token by 50% — improvements that benefit ALL OpenAI models. Sam Altman personally teased the launch. Codex has 1M+ weekly active users.

3. Six hyperscaler relationships in 40 days

Cerebras now has chip-level or infrastructure-level relationships with OpenAI ($10B+ deal), Amazon/AWS (Bedrock exclusive, Trainium+CS-3 disaggregated inference), Meta (Llama API backend), Oracle (OCI infrastructure), IBM (watsonx), plus deep partnerships with Mistral, Perplexity, Cognition, and 15+ additional named customers across enterprise, government, and life sciences.

4. Sovereign AI creates a massive new revenue stream

Cerebras for Nations (launched Nov 2025) positions the company as the infrastructure partner for sovereign AI. The India 8 exaflop supercomputer (announced Feb 20) is one of the largest AI infra investments in Asia. The UK AI Minister personally endorsed Cerebras. India’s government is targeting $200B in total AI infrastructure investment over the next two years. The Condor Galaxy network with G42 was the prototype; now it’s scaling globally.

5. Architectural moat is real and widening

The WSE-3 uses the entire 300mm silicon wafer as a single processor: 4 trillion transistors, 900K AI cores, 44GB on-chip SRAM with 21 petabytes/second bandwidth — 1,000x faster than HBM4. Cerebras maintains a 5x speed lead over Nvidia Blackwell on GPT-OSS-120B. The DARPA “Fuse” project ($45M) with Ranovus for photonic interconnects could extend this lead. Groq, the most direct inference competitor, was acquired by Nvidia for $20B in December 2025 — removing it from the independent market.

Financial model

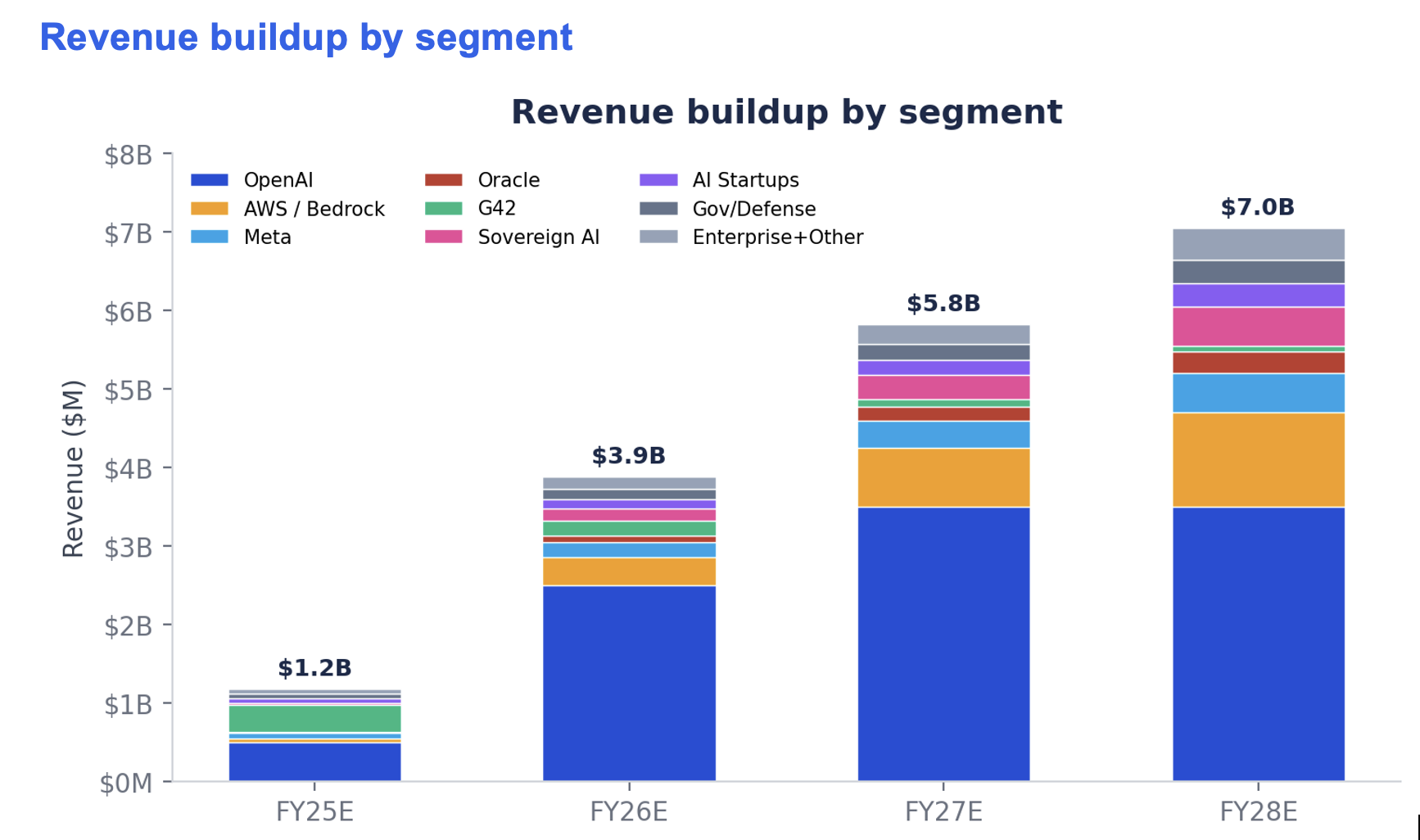

Revenue buildup by segment

| Segment | FY25E | FY26E | FY27E | FY28E | Notes |
| --- | --- | --- | --- | --- | --- |
| OpenAI | $500M | $2,500M | $3,500M | $3,500M | $10B+/3yrs |
| AWS / Bedrock | $40M | $350M | $750M | $1,200M | Exclusive |
| Meta | $75M | $200M | $350M | $500M | Llama API |
| Oracle (OCI) | $10M | $75M | $175M | $275M | Earnings call |
| G42 / Condor | $350M | $200M | $100M | $75M | Declining |
| Sovereign AI | $25M | $150M | $300M | $500M | India+UK |
| AI startups | $50M | $125M | $200M | $300M | Mistral etc |
| Gov / defense | $60M | $125M | $200M | $300M | DOE+DARPA |
| Ent + other | $60M | $150M | $250M | $400M | |
| TOTAL | $1,170M | $3,875M | $5,825M | $7,050M | |

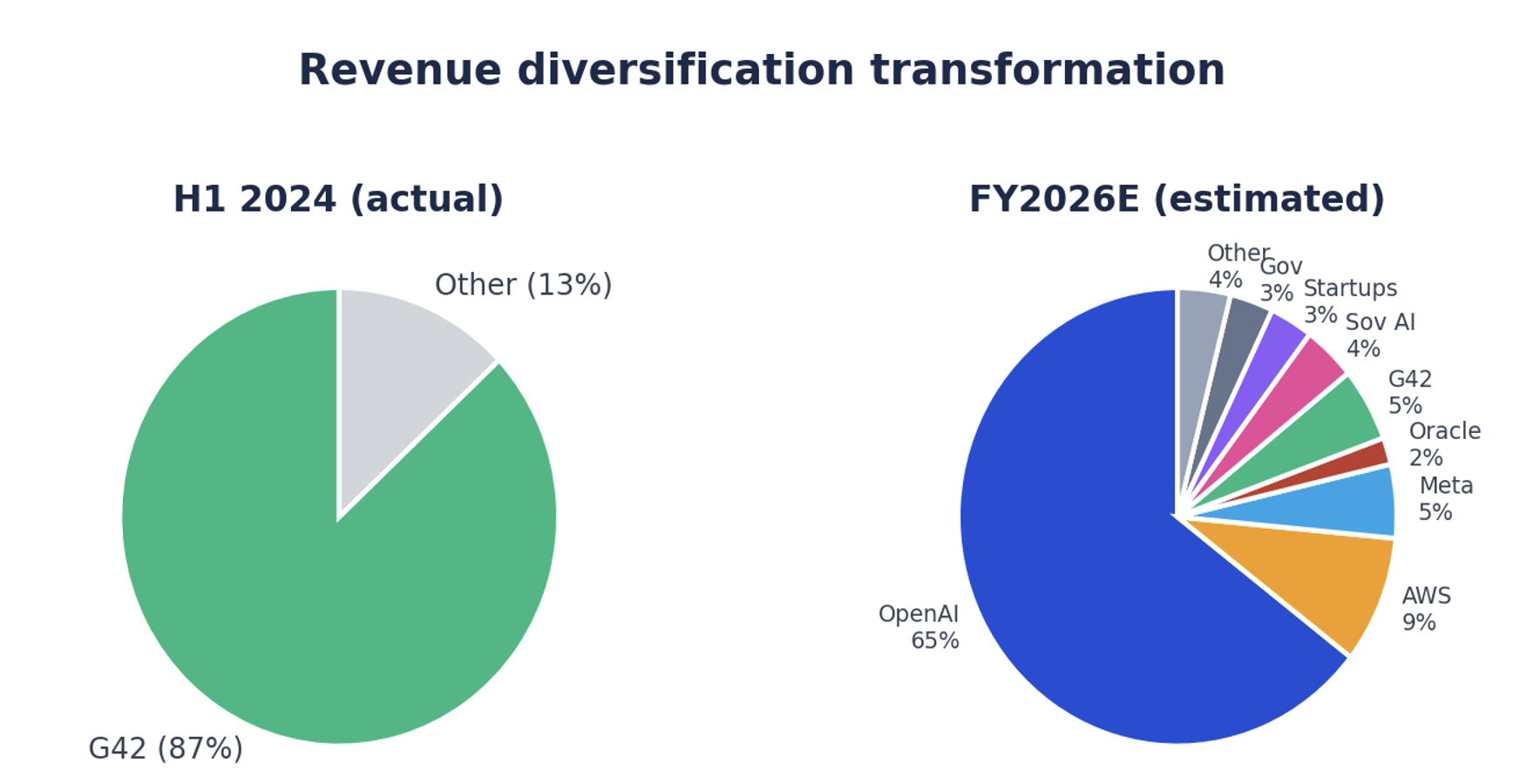

Revenue diversification transformation

In H1 2024, G42 represented 87% of revenue. By FY2026E, the largest customer (OpenAI) is modeled at just under 65% of revenue, and Cerebras has six hyperscaler-scale relationships. The Amazon Bedrock exclusive and Oracle OCI integration create two additional mega-channels that materially reduce single-customer dependency.
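The segment buildup and the concentration claim above can be checked with a few lines of arithmetic. The sketch below copies the segment figures from the buildup table, re-derives the TOTAL row, and reports the largest segment's share in each year. It treats each table row as one "customer", which is a simplification for rows such as Sovereign AI that bundle several buyers.

```python
# Segment revenue in $M, copied from the buildup table (FY25E..FY28E).
YEARS = ["FY25E", "FY26E", "FY27E", "FY28E"]
SEGMENTS = {
    "OpenAI":        [500, 2500, 3500, 3500],
    "AWS / Bedrock": [40, 350, 750, 1200],
    "Meta":          [75, 200, 350, 500],
    "Oracle (OCI)":  [10, 75, 175, 275],
    "G42 / Condor":  [350, 200, 100, 75],
    "Sovereign AI":  [25, 150, 300, 500],
    "AI startups":   [50, 125, 200, 300],
    "Gov / defense": [60, 125, 200, 300],
    "Ent + other":   [60, 150, 250, 400],
}

# Column sums should reproduce the TOTAL row of the table.
totals = [sum(column) for column in zip(*SEGMENTS.values())]

# Largest segment and its share of total revenue in each year.
top_share = {}
for i, year in enumerate(YEARS):
    name = max(SEGMENTS, key=lambda segment: SEGMENTS[segment][i])
    top_share[year] = (name, SEGMENTS[name][i] / totals[i])

for year, total in zip(YEARS, totals):
    name, share = top_share[year]
    print(f"{year}: total ${total:,}M, largest = {name} at {share:.1%}")
```

The column sums match the TOTAL row, and OpenAI comes out as the largest segment in FY26E at about 64.5% of modeled revenue, consistent with the "no more than 65%" framing but still a heavy concentration.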

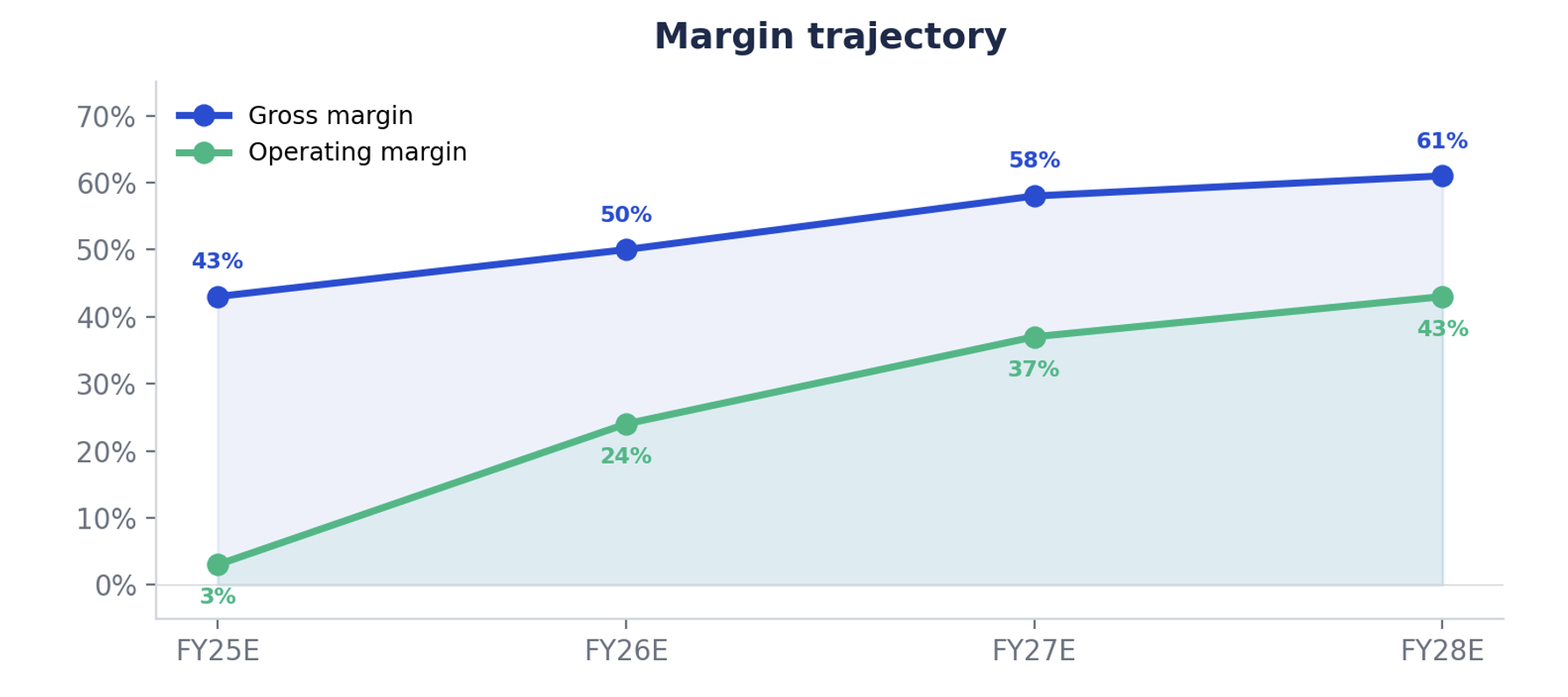

Profitability and margin trajectory

| Metric | FY25E | FY26E | FY27E | FY28E |
| --- | --- | --- | --- | --- |
| Revenue | $1,170M | $3,875M | $5,825M | $7,050M |
| Gross margin | 42–45% | 48–52% | 55–60% | 58–63% |
| Operating margin | 0–5% | 20–28% | 33–40% | 40–46% |
| Est. adj. EBITDA | $25–$60M | $800M–$1.1B | $2.0–$2.4B | $2.9–$3.3B |
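To see how the operating margin ranges relate to the EBITDA row, the implied operating income can be derived as revenue times margin. This is a sketch using only the table's figures; the dictionary names are illustrative. Operating income and adjusted EBITDA are different measures (EBITDA adds back depreciation and amortization, among other items), so the two rows should be close but not identical.

```python
# Revenue in $M and operating margin ranges (low, high), from the table.
REVENUE_M = {"FY25E": 1170, "FY26E": 3875, "FY27E": 5825, "FY28E": 7050}
OP_MARGIN = {
    "FY25E": (0.00, 0.05),
    "FY26E": (0.20, 0.28),
    "FY27E": (0.33, 0.40),
    "FY28E": (0.40, 0.46),
}

# Implied operating income range in $M for each year.
implied_op_income = {
    fy: (REVENUE_M[fy] * low, REVENUE_M[fy] * high)
    for fy, (low, high) in OP_MARGIN.items()
}

for fy, (low, high) in implied_op_income.items():
    print(f"{fy}: implied operating income ${low:,.0f}M to ${high:,.0f}M")
```

For FY26E this gives roughly $775M to $1,085M, which brackets the $800M–$1.1B adjusted EBITDA estimate in the table.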

Margin expansion thesis: As revenue shifts from hardware (36% GM) to cloud inference-as-a-service (60–70%+ GM), profitability inflects rapidly. The AWS Bedrock integration is especially accretive — Cerebras provides silicon, AWS handles sales, billing, and customer acquisition. Oracle OCI follows a similar model.
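The mix-shift argument can be made concrete with a two-bucket model: blended gross margin is the revenue-weighted average of the hardware and cloud margins. The sketch below is illustrative only. It assumes a 65% cloud margin (the midpoint of the 60–70% range), uses the midpoints of the gross margin ranges in the table, and ignores any other revenue types, none of which the report itself specifies.

```python
HARDWARE_GM = 0.36  # hardware gross margin, from the text
CLOUD_GM = 0.65     # assumption: midpoint of the 60-70% cloud range


def blended_gm(cloud_share: float,
               hardware_gm: float = HARDWARE_GM,
               cloud_gm: float = CLOUD_GM) -> float:
    """Revenue-weighted gross margin for a given cloud share of revenue."""
    return (1 - cloud_share) * hardware_gm + cloud_share * cloud_gm


def implied_cloud_share(target_gm: float,
                        hardware_gm: float = HARDWARE_GM,
                        cloud_gm: float = CLOUD_GM) -> float:
    """Cloud share of revenue needed to reach a target blended margin."""
    return (target_gm - hardware_gm) / (cloud_gm - hardware_gm)


# Assumption: midpoints of the gross margin ranges in the table.
GM_MIDPOINTS = {"FY25E": 0.435, "FY26E": 0.50, "FY27E": 0.575, "FY28E": 0.605}
cloud_mix = {fy: implied_cloud_share(gm) for fy, gm in GM_MIDPOINTS.items()}

for fy, share in cloud_mix.items():
    print(f"{fy}: implied cloud share of revenue {share:.0%}")
```

Under these assumptions the margin path implies cloud revenue rising from about a quarter of the mix in FY25E to more than four-fifths by FY28E, which shows how much of the profitability thesis rests on the shift to inference-as-a-service.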

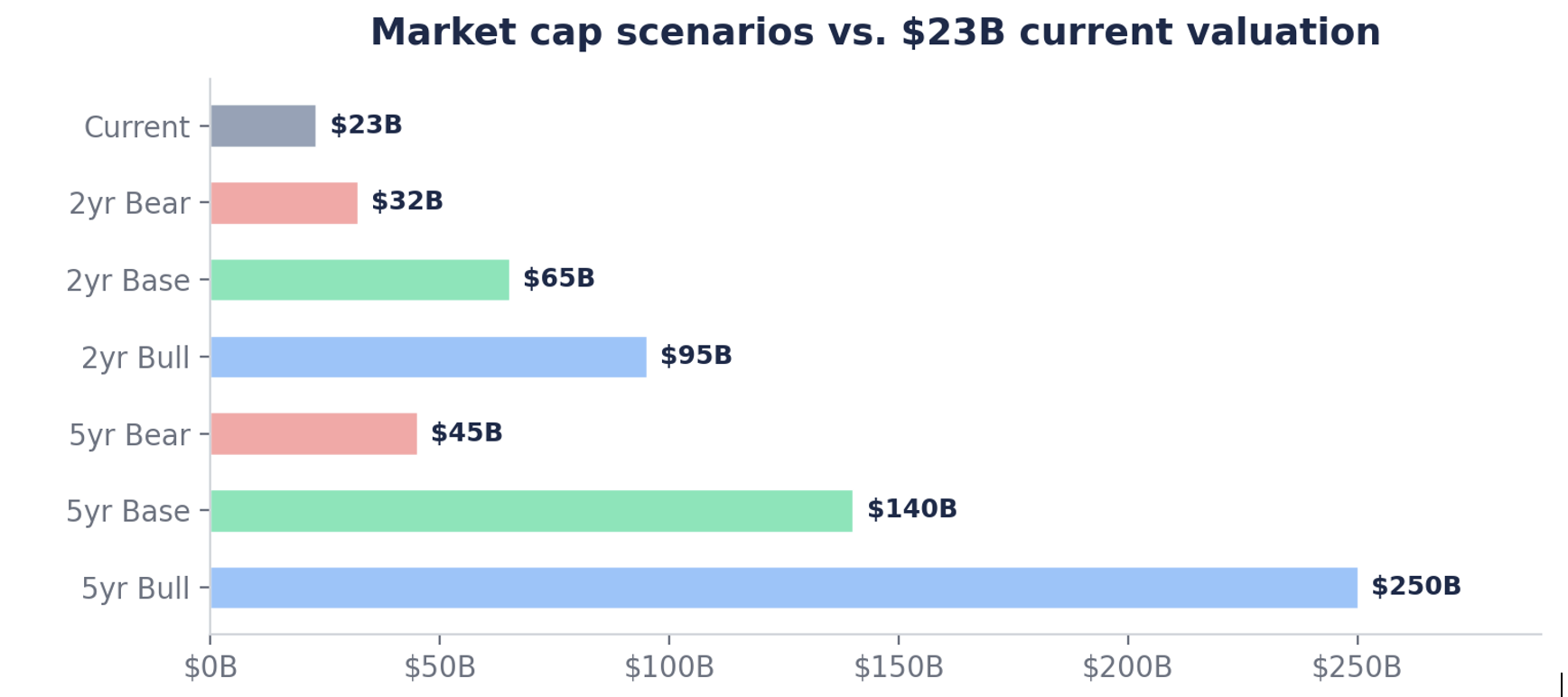

Valuation: where could the stock go?

| | Bear | Base | Bull |
| --- | --- | --- | --- |
| 2-year (Q2 2028) | $32B | $65B | $95B |
| 5-year (Q2 2031) | $45B | $140B | $250B+ |
| vs. $23B current (2-year / 5-year) | +39% / +96% | +183% / +509% | +313% / +987%+ |
| Key assumption | OpenAI under-delivers; Nvidia closes gap | Full ramp; AWS + Oracle scale; sovereign AI | De facto inference standard; additional hyperscalers |
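The upside percentages in the scenario table follow from dividing each target by the $23B current valuation. The sketch below reproduces them and adds an annualized return for each scenario. The annualized figures are not in the report; they assume holding periods of exactly 2 and 5 years, which only approximates the Q2 2028 and Q2 2031 target dates.

```python
CURRENT_BN = 23.0  # Series H valuation, $B

# Scenario targets in $B, from the table.
TARGETS_BN = {
    "2-year": {"bear": 32.0, "base": 65.0, "bull": 95.0},
    "5-year": {"bear": 45.0, "base": 140.0, "bull": 250.0},
}
HORIZON_YEARS = {"2-year": 2, "5-year": 5}  # assumption: whole-year horizons


def upside(target: float, current: float = CURRENT_BN) -> float:
    """Total return from current valuation to target, as a fraction."""
    return target / current - 1


def annualized(target: float, years: int, current: float = CURRENT_BN) -> float:
    """Compound annual return implied by reaching target in `years` years."""
    return (target / current) ** (1 / years) - 1


for horizon, scenarios in TARGETS_BN.items():
    for name, target in scenarios.items():
        total = upside(target)
        yearly = annualized(target, HORIZON_YEARS[horizon])
        print(f"{horizon} {name}: ${target:.0f}B, {total:+.0%} total, {yearly:.0%} per year")
```

Expressed per year, the base case implies a faster compounding rate over the first two years than over the full five, so most of the modeled re-rating is front-loaded around the IPO window.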

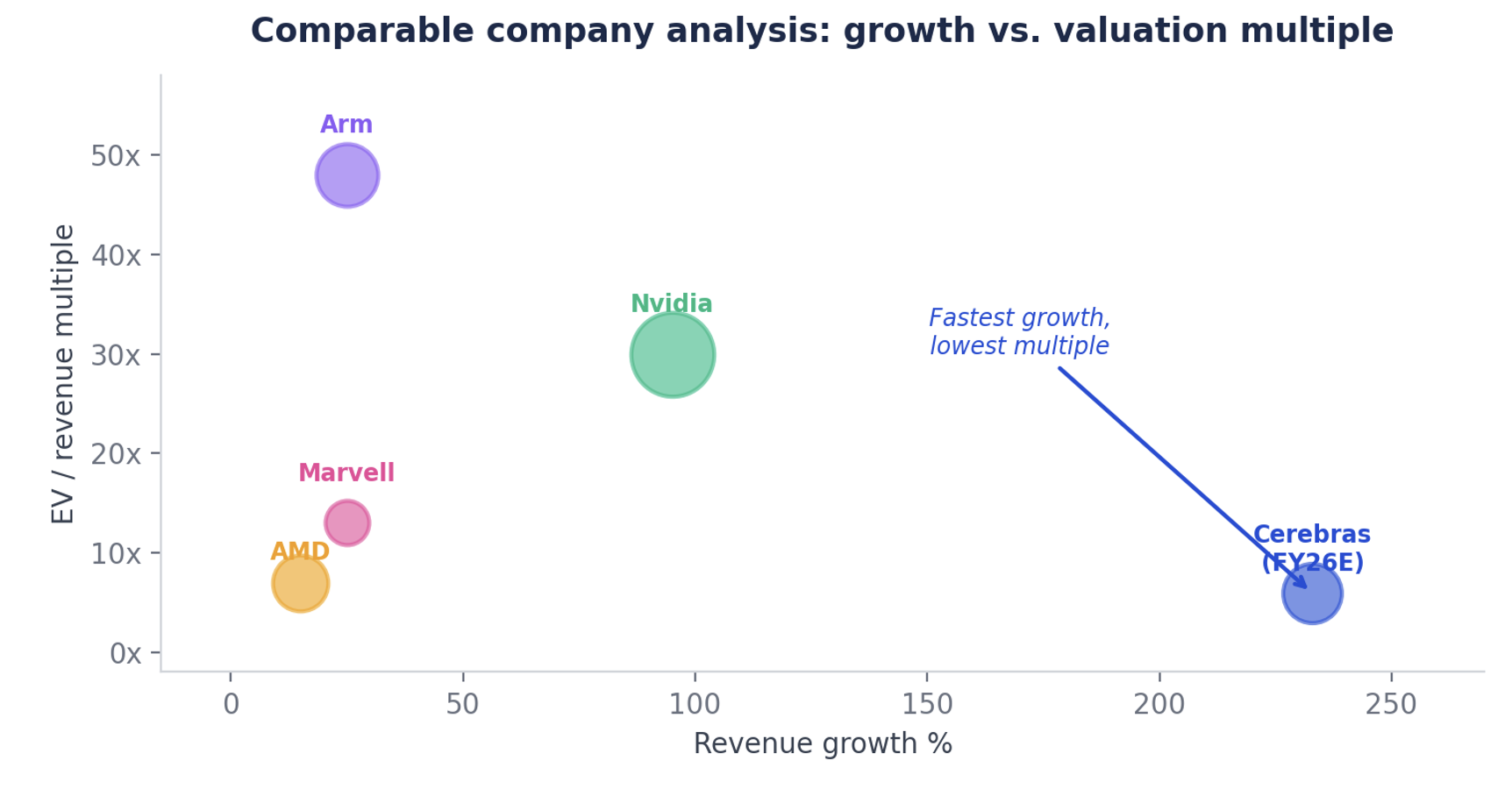

Comparable company analysis

| Company | Mkt Cap | LTM Rev | EV/Rev | Growth |
| --- | --- | --- | --- | --- |
| Nvidia | $3.4T | $115B | ~30x | ~95% |
| Arm | $190B | $4B | ~48x | ~25% |
| AMD | $180B | $26B | ~7x | ~15% |
| Marvell | $80B | $6B | ~13x | ~25% |
| Cerebras (FY26E) | $23B | $3.9B est. | ~5.9x | ~233% |

At 5.9x FY2026E revenue, Cerebras trades at a fraction of Arm (48x) and Nvidia (30x) while growing roughly 9x faster than Arm and 2.5x faster than Nvidia, based on the growth rates in the table above. If the public market assigns even 15x forward revenue on $3.9B, the implied market cap is about $58B, a 152% return from the current valuation.
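The multiples and the 15x scenario above are simple ratios and can be reproduced as follows. This sketch uses market cap divided by revenue as a stand-in for EV/Revenue, which assumes net debt is negligible; the table does not list enterprise values, so that is an approximation rather than the report's stated method.

```python
# (market cap $B, revenue $B) from the comparables table.
COMPS = {
    "Nvidia": (3400.0, 115.0),
    "Arm": (190.0, 4.0),
    "AMD": (180.0, 26.0),
    "Marvell": (80.0, 6.0),
    "Cerebras (FY26E)": (23.0, 3.9),
}

# Revenue multiple, using market cap as a proxy for enterprise value.
multiples = {name: cap / revenue for name, (cap, revenue) in COMPS.items()}

# Re-rating scenario from the text: 15x forward revenue.
scenario_multiple = 15.0
cerebras_cap, cerebras_revenue = COMPS["Cerebras (FY26E)"]
implied_cap_bn = scenario_multiple * cerebras_revenue
implied_return = implied_cap_bn / cerebras_cap - 1

for name, multiple in multiples.items():
    print(f"{name}: {multiple:.1f}x revenue")
print(f"Implied cap at {scenario_multiple:.0f}x: ${implied_cap_bn:.1f}B "
      f"({implied_return:+.0%} vs. ${cerebras_cap:.0f}B)")
```

The unrounded implied cap is $58.5B, so the return comes out a point or two above the 152% quoted in the text, which rounds the cap down to $58B first.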

Key risks

Customer concentration 2.0: OpenAI could represent 60–65% of FY2026 revenue. Mitigant: AWS Bedrock exclusive + Oracle OCI now create two additional mega-channels, materially reducing single-customer dependency vs. the G42 era.

Nvidia competitive response: Blackwell and Rubin GPUs will narrow the inference gap. Nvidia acquired Groq for $20B. Cerebras maintains a 5x speed lead over Blackwell on GPT-OSS-120B today, but this must be defended.

TSMC single-source: WSE-3 is fabricated exclusively on TSMC 5nm. No alternative exists for wafer-scale manufacturing. Any TSMC disruption would halt production.

Execution on buildout: 750MW for OpenAI + AWS Bedrock integration requires massive parallel capex. Cerebras has raised $2.1B in 5 months partly to fund this, but delays would reduce near-term revenue recognition.

Software ecosystem: Nvidia’s CUDA remains deeply entrenched. CSoft and the Cerebras developer platform are growing (#1 on HuggingFace, 5M+ monthly requests) but are still nascent vs. CUDA’s 20-year head start.

Conclusion: the asymmetric opportunity

Cerebras represents a rare asymmetric opportunity. The downside is bounded by $10B+ in contracted OpenAI backlog, exclusive AWS Bedrock integration, and validated technology (20x faster inference, #1 on HuggingFace). The upside is substantial if the company becomes the de facto inference standard for a market projected to reach $255B by 2030.

The key insight the market may be underpricing: inference is not a commodity where Nvidia automatically wins. Unlike training (where CUDA lock-in is extreme), inference is workload-by-workload, latency-sensitive, and architecturally favors single-chip solutions. The AWS disaggregated architecture (Trainium for prefill, CS-3 for decode) may become the industry standard — and every Bedrock customer becomes a potential Cerebras revenue source.

At $23B on forward FY2026 revenue of $3.9B, Cerebras trades at 5.9x — a fraction of comparable AI infrastructure multiples. With six hyperscaler relationships, the strongest pre-IPO customer roster of any AI hardware company in history, and the only independent high-speed inference platform left standing after Nvidia’s Groq acquisition, the path to $65–140B+ market cap within 2–5 years is credible.

⚠️ Disclaimer: This report is for informational purposes only and does not constitute investment advice, a recommendation, or a solicitation to buy or sell any security. All revenue figures, projections, and price targets are based on assumptions and estimates that may prove materially incorrect. Past performance is not indicative of future results. The author holds a small position in Cerebras purchased through secondary markets and may have a financial interest in the subject matter discussed. Do your own diligence before making any investment decisions.